Understanding Social Digital Responsibility

In this post, we explore how Social Digital Responsibility practices can improve an organization’s relationships with people, communities, and society overall.

In Netflix’s documentary, The Social Dilemma, Silicon Valley executive Tim Kendall, who worked at Facebook and Pinterest, tells the audience that the most immediate thing he’s concerned about regarding misinformation and “fake news” on social media is civil war.

In his article, Platforms Must Pay for Their Role in the Insurrection, journalist Roger McNamee expressed a similar sentiment:

In their relentless pursuit of engagement and profits, these platforms created algorithms that amplify hate speech, disinformation, and conspiracy theories. This harmful content is particularly engaging and serves as the lubricant for businesses as profitable as they are influential.

— Roger McNamee, Wired

These platforms also enforce their terms of service in ways that favor extreme speech and behavior, predominantly right-wing extremism.

As technology became more and more present in our daily lives, we let Surveillance Capitalism worm its way into everything we do. Engagement and interaction drive Big Tech algorithms, not truth or altruism.

They make their money when users click and comment. Whether someone sees the light regarding the earth’s shape, the efficacy of a vaccine, or the validity of an election is irrelevant.

And that’s a big problem.

Not Just Fake News

While conspiracy theories and misinformation understandably get the lion’s share of press coverage, there are other ways that digital products, services, and practices undermine our social fabric as well. For example, what if:

- Your organization uses an AI-powered hiring tool that inadvertently discriminates against women or people of color for digital positions within your company?

- Personal information about your nonprofit’s donors or volunteers gets breached and leaked via a website hack? (If your nonprofit deals with sensitive or controversial issues, this could be particularly problematic.)

- The company’s employee benefits portal doesn’t work for people with disabilities who use assistive technology, undermining their ability to access critical, health-related information and putting lives at risk?

Unfortunately, issues like these occur daily across organizations large and small. And there are real consequences. As a Certified B Corp, we’re committed to making better business decisions that benefit our stakeholders. As a digital agency that designs technology solutions every day, we also want to prevent unintended consequences and help our clients run more impactful organizations.

With that in mind, how can responsible leaders ensure that their digital policies are used for good? What specific practices will ensure that your organization’s digital choices are responsible and in society’s best interest? Let’s figure that out.

We’ve been designing giant world-wide networks that manage personal relationships, generate abuse and harassment, and can’t tell (or don’t care about) the difference between a good or a bad actor. We’re happy to have Nazis on our platforms because they count as engagement. We’re happy to let people post the addresses of parents of slain children because we can sell ads against it. We’re also designing “smart” devices that can listen to and watch everything we do in our homes.

— Mike Monteiro, Ruined by Design

These things are being designed by people who have no idea what their professional ethical code is, or any recourse to deal with designers who break it.

Defining Social Digital Responsibility

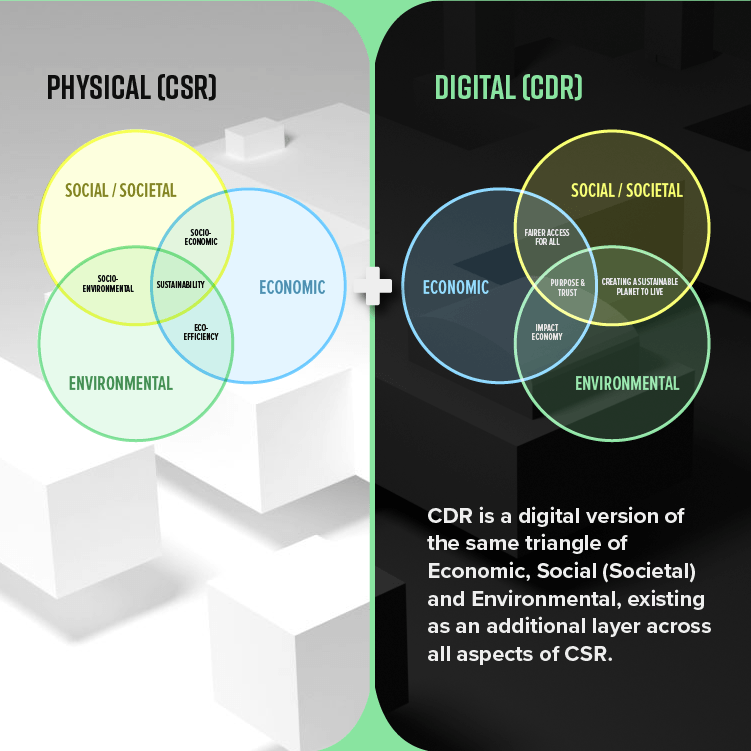

Social Digital Responsibility—one of three subsets of Corporate Digital Responsibility—addresses how your organization uses digital products, services, programs, or practices to benefit society. It is part of a holistic approach to digital sustainability as well.

In other words, how do you use digital to create and nurture thriving, productive relationships with employees, customers, communities, and other stakeholders?

Your approach to social digital responsibility plays a key role in your brand identity, customer relationships, hiring practices, and many other touch points throughout your organization. It is core to how you communicate and socialize online.

Social digital responsibility practices also impact many stakeholders. Because of this, you should clearly understand how your decisions might impact each group. Moreover, digital tools can help employees, customers, and communities more effectively interact with your organization. Opportunities will arise across departments and disciplines to improve these relationships.

As our technology becomes infinitely more powerful, there are also increasingly serious ethical concerns. We will have to come to some consensus on issues like what accountability a machine should have and to what extent we should alter the nature of life.

— Greg Satell, Digital Tonto

Social Digital Responsibility Practices for Your Organization

These issues are often complicated and messy. Technology changes quickly. Many times, the way forward is not clear or obvious, and we learn the consequences only after the fact.

So what can we do? Here are a few ideas. This list is by no means comprehensive, but it’s a place to start.

Fight Misinformation and Disinformation

First and foremost, responsible nonprofit and business leaders must fight misinformation across all channels and platforms with which our organizations interact. If you don’t already have quality assurance (QA) processes for creating and sharing information, it’s time to craft some.

Here are several tangible things your organization can do to support truth and fight misinformation:

- Fact-check all messaging for every channel. This will require extra time or maybe a dedicated content moderator, but your efforts will pay off. Who wants to learn after the fact that they have misinformed stakeholders, or worse, incited violence or sown misplaced discontent?

- Respond quickly and honestly to online criticism. Sometimes you mess up and make mistakes. People get angry. Cancel culture is a thing. The longer you wait to respond to criticism or correct misinformation, the more widespread damage can be done to your reputation. When you respond, be honest, own your mistakes, and outline how you plan to rectify the situation. The internet has a funny way of sniffing out disingenuous claims. Make truth your North Star.

- Support content moderation legislation. Bills introduced in the U.S. and abroad could hold tech and social media companies accountable for the content on their platforms. Your organization can get involved in multiple ways, including signing petitions or, better yet, actively lobbying legislators to enact these laws.

- Don’t participate. While the idea of deleting social media accounts might seem extreme, it is possible to run your organization without them, as fellow B Corp Keap Candles did.

Trackers hidden on the vast majority of websites collect as much information about us as possible and try to link that data to our actions online as well as off, typically to send us targeted ads. The idea behind Global Privacy Control would be to place a setting on your browser that tells every site you visit that you don’t want your data to be sold or shared with anyone else, and websites would have to respect your wishes.

— Sara Morrison, Vox

Data Privacy and Protection

If adopted, Global Privacy Control would let users automatically opt out of having their data sold or shared at every website they visit. Users currently have to do this on a site-by-site basis, which is time-consuming and frustrating. Global Privacy Control could significantly impact how organizations conduct their digital marketing.
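To make this concrete, here is a minimal sketch of how a website's server-side code might honor the signal. It assumes the `Sec-GPC: 1` request header described in the Global Privacy Control proposal. The function names and the consent flag are hypothetical, and a real implementation would also need to follow whatever privacy laws apply to your organization.

```python
from typing import Mapping


def gpc_opt_out(headers: Mapping[str, str]) -> bool:
    """Return True when the request carries the Global Privacy Control signal."""
    # HTTP header names are case-insensitive, so normalize before looking up.
    normalized = {name.lower(): value.strip() for name, value in headers.items()}
    # The GPC proposal defines "1" as the only meaningful value.
    return normalized.get("sec-gpc") == "1"


def may_share_data(headers: Mapping[str, str], user_consented: bool) -> bool:
    """Hypothetical policy: share or sell data only with consent and no GPC opt-out."""
    return user_consented and not gpc_opt_out(headers)
```

In this sketch the opt-out always wins: a visitor who previously consented but now sends the signal is treated as opted out.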

Provided users give consent, your organization is responsible for protecting their data and ensuring its privacy. This includes data from employees, customers, partners, or anyone who might be adversely impacted by a breach.

There are some critical things every organization should do to respect customers’ and end users’ right to privacy:

- Use tools that respect privacy. Find alternatives to Surveillance Capitalism solutions. App Tracking Transparency in iOS 14, for example, allows users to control which apps can share their data. Similarly, tools like Privacy Badger will monitor, learn, and block invisible trackers in your browser. Encourage the use of these tools in your organization as well as with partners.

- Protect collected data. Keep software up to date, back up databases, and regularly audit and update content management systems, CRMs, and other online or cloud-based software for security vulnerabilities. In addition, change passwords often. Depending on the information you collect, failure to do these things could be disastrous.

- Abide by privacy laws. Follow guidelines outlined by legislation like the General Data Protection Regulation (GDPR) in Europe, New York’s SHIELD Act, or California’s Consumer Privacy Act (CCPA). Here’s a checklist that should cover most situations.

- Get a Content Security Policy (CSP). Hackers are everywhere, and it’s only a matter of time before they target your website or product. That’s just a simple fact. Add a Content Security Policy to your website to restrict how resources such as JavaScript, CSS, or other elements load when visitors use your site. CSP can also help guard against cross-site scripting (XSS) attacks and clickjacking.

- Offer easy opt-out and data removal options. It’s not illegal to collect data, provided you get permission to do so. Similarly, customers should be in control of what you do with their data and when said data is removed from your systems. Provide easy options to facilitate this.

- Train your stakeholders. Finally, employees, vendors, partners, or other relevant stakeholders should be trained on data security and privacy issues as well as company policies and best practices to ensure data integrity over time.
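As an illustration of the Content Security Policy item above, here is a small sketch that assembles a `Content-Security-Policy` header value. The directive names (`default-src`, `script-src`, `style-src`, `frame-ancestors`) are standard CSP directives. The helper function and the example policy are our own assumptions and only a starting point, since most sites need to allow additional trusted sources.

```python
from typing import Mapping, Sequence


def build_csp(directives: Mapping[str, Sequence[str]]) -> str:
    """Join directive names and their allowed sources into one header value."""
    return "; ".join(
        f"{name} {' '.join(sources)}" for name, sources in directives.items()
    )


# Illustrative policy (an assumption, not a recommendation for every site).
EXAMPLE_POLICY = {
    "default-src": ["'self'"],      # load resources only from your own origin
    "script-src": ["'self'"],       # disallow inline and third-party scripts (limits XSS)
    "style-src": ["'self'"],
    "frame-ancestors": ["'none'"],  # forbid framing by other pages (limits clickjacking)
}

# A (name, value) pair you could attach to responses in your web framework.
csp_header = ("Content-Security-Policy", build_csp(EXAMPLE_POLICY))
```

A cautious way to roll this out is to send the same value in the `Content-Security-Policy-Report-Only` header first, so you can see what would be blocked before enforcing it.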

To decrease the digital divide gap, we must tackle the problems of poverty, low education levels, and poor infrastructure.

— Carmen Steele, Digital Divide Council

Digital JEDI Practices

The term “digital divide” can cover topics from rural access to broadband to technology access in (or out of) the classroom. The heart of this issue, however, is that people from marginalized or underrepresented communities often have less access to technology and decreased opportunities to advance digital skills. Unfortunately, this is compounded greatly by systemic racism, ableism, gender bias, and so on.

To combat these systemic issues, we can start with our own organizations. Within companies or nonprofit organizations, justice, equity, diversity, and inclusion (JEDI) practices are typically the purview of human resources departments. Digital, however, doesn’t often have its own department within an organization. When you combine these two important principles, many opportunities arise to improve organizational practices across departments and disciplines.

Here are a few ideas to get you started:

- Employ blind hiring practices for digital positions. Increasingly, online hiring platforms offer the ability to anonymize applicant identity characteristics when organizations fill new positions. This can reduce unconscious bias that might occur during the hiring process. Similarly, aligning your organization with partners that provide digital skills to underrepresented communities through internships or mentoring can open opportunities that help everyone.

- Represent diversity in user research that reflects society. Digital-native organizations employ user research and testing tactics to better understand who their digital products and services serve. When diverse perspectives are removed from this process, bad choices occur. These choices can lead to unintended consequences such as those mentioned earlier in this post. Map your stakeholders and include diverse perspectives in the creation process. You will get better solutions as a result.

- Re-train employees who could be replaced by automation. If your organization plans to invest in emerging technologies such as blockchain, AI, IoT, or the like, earmark part of that budget for re-training existing employees who could be impacted by those decisions.

- Support inclusive legislation. Discrimination in any form is a civil rights issue that should be backed up by common sense legislation. Bullying, hate speech, and harassment occur regularly across today’s globally networked platforms. Your organization can take an active stance against this by signing petitions and joining industry or sector alliances that hold these platforms accountable and support inclusive regulations. Better yet, engage legislators in person. They appreciate hearing from local businesses, and listening to your concerns is literally their job. Just do your homework first.
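For the blind hiring idea above, here is a rough sketch of what anonymizing an applicant record might look like before reviewers see it. The field names are hypothetical, and real hiring platforms will structure their data differently. Note that free-text fields such as cover letters can still reveal identity, so this is only one piece of the process.

```python
import hashlib

# Hypothetical field names; real applicant tracking systems will differ.
IDENTITY_FIELDS = {"name", "email", "phone", "photo_url", "date_of_birth", "address"}


def anonymize_applicant(record: dict, salt: str) -> dict:
    """Return a copy of the record with identity fields replaced by a stable ID."""
    # HR can recompute this ID from the email and the secret salt after review,
    # while reviewers never see the email address itself.
    digest = hashlib.sha256((salt + record["email"]).encode("utf-8")).hexdigest()
    redacted = {key: value for key, value in record.items() if key not in IDENTITY_FIELDS}
    redacted["applicant_id"] = digest[:12]
    return redacted
```

The original record is left untouched, so the full details remain available to whoever schedules interviews once the blind review is complete.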

Access to Information

The internet is vital for access to information, jobs, and education. It improves workers’ rights, ensures freedom of expression, and should be a human right. This is especially true in the age of COVID-19.

Here are two things you can do today to support universal access to information within your organization:

- Follow accessibility and inclusion guidelines for all digital products and services. Your website, mobile apps, and any digital products or services should be accessible to users of any ability and those with low bandwidth or using slower devices. Follow the W3C’s Web Content Accessibility Guidelines for users with disabilities and Sustainable Web Design principles to create fast, efficient digital products that work well on any device or connection.

- Support open internet access for all. Campaigns like the World Wide Web Foundation’s Contract for the Web put internet access and openness at the heart of an ongoing global campaign, but that’s not the only way to get involved.
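Automated checks can catch some accessibility problems early. As a small example, the sketch below uses Python's built-in `html.parser` module to list images that have no `alt` attribute, which relates to the WCAG requirement for text alternatives. The class and function names are our own, and a check like this covers only a sliver of the guidelines. It is no substitute for a full audit or for testing with people who use assistive technology.

```python
from html.parser import HTMLParser
from typing import List


class MissingAltFinder(HTMLParser):
    """Collect the src of every <img> that has no alt attribute at all."""

    def __init__(self) -> None:
        super().__init__()
        self.missing: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attr_map = dict(attrs)
        # alt="" is valid for purely decorative images, so only flag a missing attribute.
        if "alt" not in attr_map:
            self.missing.append(attr_map.get("src") or "(no src)")


def images_missing_alt(html: str) -> List[str]:
    """Return the sources of images in the markup that lack an alt attribute."""
    finder = MissingAltFinder()
    finder.feed(html)
    finder.close()
    return finder.missing
```

Running `images_missing_alt` over your page templates during a build is one lightweight way to keep this particular issue from slipping into production.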

The advances in the use/reuse of data and digital technologies have produced multiple advantages and disadvantages while permeating all aspects of daily life to the extent that the risk of using ML/AI-enabled applications has become normalized. This has led to little questioning by users of what data is collected, for what purpose, and how this informs technological advances or decisions made about them. Indeed, despite regulations such as GDPR, the Digital Services Act, and the EU AI Act, which require organizations to be more transparent about ML/AI and data use to make users aware of cyber privacy and security issues.

— IEEE, Corporate Digital Responsibility (CDR) Securing Our Digital Futures

How AI Impacts Social Digital Responsibility

The rapid rise of Generative AI offers opportunities to redesign common business and marketing practices to improve efficiency and problem-solving. It also drives multiple data inequality issues and both environmental and ethical challenges. Here are just a few examples:

- Disinformation: Gen AI tools make it easy to create and distribute convincing false narratives and media.

- Algorithmic bias: Algorithm-driven business tools—hiring platforms, for example—are known to discriminate due to data and representation gaps when training their Large Language Models (LLMs).

- Copyright: AI companies use intellectual property to train LLMs without compensating owners.

- Data privacy: Data privacy issues associated with AI present significant legal challenges, especially as privacy legislation constantly evolves.

- Sustainability: AI uses huge amounts of energy, water, land, and other resources, which can lead to social justice issues like community displacement.

To make artificial intelligence more ethical and sustainable, we need to address the issues above through meaningful legislation, improved standards, and industry awareness and training. In the meantime, we recommend creating a clear Code of Ethics and/or AI policy to govern its use within your organization.

[Who owns CDR in your organization?] When the answer is everyone, the responsibility can sometimes fall between the gaps, especially during times when everyone’s attention is necessarily elsewhere (i.e. a global pandemic).

— Rob Price, Whose Responsibility is it Anyway?

Responsible Tech Advocacy Toolkit

Advocate for responsible tech policies that support stakeholders with this resource from the B Corp Marketers Network, published on B the Change.

Defining Ownership of Social Digital Responsibility

The lists above cover a lot of ground but share a single common thread: each tactic promotes the responsible use of digital technologies to build and strengthen positive, productive relationships between society and your organization.

Because social digital responsibility practices impact so many stakeholders across disciplines, it is critical to define who owns these practices. However, there’s often no easy answer or single source of truth within an organization, which makes implementing policies and practices challenging.

This requires buy-in from leadership and ongoing vigilance by those who care. If you notice consistent issues within your organization, call them out. Bring attention to them. Devise practical solutions. If necessary, form a coalition. Suggest your company adopt a set of CDR policies.

Hopefully, this post helps you chart a course toward addressing these important issues. If you have questions or would like to suggest an addition to this post, please drop us a line or message me on LinkedIn.

Responsible Digital Strategy

Learn more about how Mightybytes uses responsible digital strategy to position our clients for long-term success.